Inference gets the attention, but continuous sensor data drives memory, bandwidth, and power constraints. compressionKIT™ reduces data at the source, enabling more efficient, scalable edge AI systems.

The past few years of edge AI development have delivered major gains in inference efficiency. Models that once required server-class hardware now run on nano-sized chips, completing inference in milliseconds on a coin-cell budget.

But there’s a second cost center that gets far less attention: the overhead of handling continuous sensor data.

Always-on devices don’t just run inference—they generate data nonstop. A smartwatch streaming PPG doesn’t pause between heartbeats. An ECG patch doesn’t sleep. Smart rings, hearables, and other sensors continuously produce data that must be stored, transmitted, or processed.

That creates a system-level burden:

- Stored locally, data quickly fills limited memory

- Transmitted wirelessly, it becomes a major source of power consumption

- Sent to the cloud, it drives bandwidth and infrastructure costs

At scale, these costs compound across millions of devices—and often rival or exceed the cost of running inference itself.

compressionKIT is Ambiq’s answer to this challenge. It’s an AI-based codec that compresses continuous sensor streams at the source, before data is stored, transmitted, or analyzed, while preserving the signal structure needed for downstream processing. The technical overview follows.

How compressionKIT Works

compressionKIT encodes raw sensor data into a compact representation using a model trained on real-world signal conditions. Instead of applying fixed mathematical transforms, it learns which parts of a signal carry meaningful information and prioritizes preserving those features during compression.

The decoder reconstructs the signal based on the selected compression level—ranging from near-lossless to highly compact representations that still retain the core waveform structure needed for downstream processing.

Controlling Compression vs. Fidelity

Compression is adjustable, with targets ranging from 2× to 16× (and up to ~20× with entropy encoding)¹. Each step reduces data volume while introducing some reconstruction error.
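To illustrate why the entropy-coded figure is quoted as "up to" rather than as a fixed ratio, here is a minimal sketch. It uses Python's `zlib` purely as a stand-in entropy coder; the post does not say which coder compressionKIT uses, and the two payloads are hypothetical encoder outputs invented for the example. The only figures taken from the post are the fixed 16× model ratio and the ~20× overall average.

```python
import random
import zlib

MODEL_RATIO = 16  # fixed reduction from the model, as stated in the footnote


def entropy_gain(payload: bytes) -> float:
    """Input size divided by zlib-compressed size (zlib is only a stand-in coder)."""
    return len(payload) / len(zlib.compress(payload, 9))


rng = random.Random(0)
# Hypothetical encoder outputs: one highly repetitive, one close to random.
repetitive = bytes(i % 4 for i in range(512))
noisy = bytes(rng.randrange(256) for _ in range(512))

for name, payload in (("repetitive", repetitive), ("noisy", noisy)):
    gain = entropy_gain(payload)
    print(f"{name}: entropy gain {gain:.2f}x, overall {MODEL_RATIO * gain:.1f}x")

# Average entropy-stage gain implied by the post's figures: 20x overall / 16x fixed.
print(f"implied average entropy gain: {20 / MODEL_RATIO:.2f}x")
```

The gain from the second stage depends on how predictable the encoded bytes are, so the overall ratio varies from window to window even though the model's 16× stage is fixed.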

We evaluate this tradeoff using PRD (Percent Root-Mean-Square Difference), which measures how much the reconstructed signal deviates from the original:

- 2× compression: ~4.8% error, visually indistinguishable from the original

- Higher compression levels: increased error, but core signal structure remains intact

This allows developers to choose the right balance between data size, signal quality, and system constraints based on their application.
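As a rough illustration of the metric, the sketch below computes PRD in plain Python using one common definition: 100 × sqrt(Σ(x − x̂)² / Σx²). This is an assumption, since some variants subtract the signal mean first and the post does not say which form Ambiq reports. The function name `prd_percent` and the sample values are hypothetical.

```python
import math


def prd_percent(original, reconstructed):
    """Percent root-mean-square difference between two equal-length signals.

    Uses the non-mean-subtracted definition (an assumption; other variants exist).
    """
    if len(original) != len(reconstructed):
        raise ValueError("signals must have the same length")
    error_energy = sum((x - y) ** 2 for x, y in zip(original, reconstructed))
    signal_energy = sum(x ** 2 for x in original)
    if signal_energy == 0:
        raise ValueError("original signal has zero energy")
    return 100.0 * math.sqrt(error_energy / signal_energy)


# Hypothetical example: a short waveform and a slightly perturbed copy.
original = [0.0, 1.0, 2.0, 1.0, 0.0, -1.0, -2.0, -1.0]
reconstructed = [0.1, 0.9, 2.1, 1.0, 0.0, -1.1, -1.9, -1.0]
print(f"PRD = {prd_percent(original, reconstructed):.2f}%")
```

A lower PRD means the reconstruction tracks the original more closely; identical signals give 0%.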

At 16× compression, error increases to ~11%, but the fundamental signal structure remains intact. A fixed 16× ratio cuts transmitted data by about 94%, and the ~20× reached with entropy encoding brings the reduction to ~95%, delivering significant power savings.
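The link between a compression ratio and the share of data removed is simple arithmetic: a ratio of r leaves 1/r of the bytes, so the reduction is 1 − 1/r. The short sketch below tabulates this. The 2×, 16× and 20× ratios come from the post; 4× and 8× are illustrative intermediate settings, not confirmed product options.

```python
def data_reduction_percent(compression_ratio):
    """Percentage of bytes removed for a given compression ratio (16 means 16x)."""
    if compression_ratio <= 0:
        raise ValueError("compression ratio must be positive")
    return 100.0 * (1.0 - 1.0 / compression_ratio)


# 4x and 8x are illustrative intermediate points only.
for ratio in (2, 4, 8, 16, 20):
    print(f"{ratio:>2}x -> {data_reduction_percent(ratio):.2f}% less data")
```

By this arithmetic, 16× removes 93.75% of the data and 20× removes 95%. The returns diminish quickly: going from 16× to 20× saves only about one more percentage point of the original volume.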

Because BLE transmission is often one of the largest power consumers in wearable devices, reducing data volume directly extends battery life. For applications where signal fidelity is critical—such as clinical-grade wearables and diagnostic patches—lower compression settings maintain sub-5% error while still delivering meaningful reductions in bandwidth and memory.

Compression That Also Denoises

One of the key advantages of an AI-based codec is its ability to handle noise.

Traditional codecs compress everything in the signal—both meaningful structure and unwanted artifacts. In contrast, compressionKIT is trained on real-world data with varying noise conditions, allowing it to distinguish between signal features worth preserving and noise that can be removed.

As a result, compression and denoising happen in a single pass—no additional processing stages or compute required.

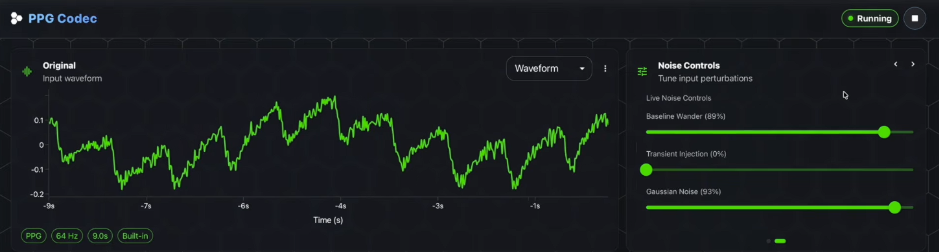

In the live demo, adding baseline wander and Gaussian noise to the input PPG produced a visibly degraded waveform, while the reconstructed output remained clean.

For wearables operating in real-world conditions, where motion artifacts and electrical interference are constant, handling compression and denoising in one codec is a clear advantage over traditional fixed-transform approaches.
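For readers who want to reproduce this kind of stress test on their own pipeline, the sketch below builds a corrupted input of the sort described above: a clean waveform plus slow baseline wander plus Gaussian noise. Everything here is an assumption made for illustration. The sine wave is only a stand-in for a real PPG pulse, and the amplitudes, frequencies and function name are hypothetical, not the demo's settings.

```python
import math
import random


def noisy_test_signal(n_samples, sample_rate_hz, seed=0):
    """Return (clean, noisy): a sine 'pulse' with baseline wander and Gaussian noise added."""
    rng = random.Random(seed)
    clean, noisy = [], []
    for i in range(n_samples):
        t = i / sample_rate_hz
        pulse = math.sin(2 * math.pi * 1.2 * t)         # 1.2 Hz, roughly 72 beats per minute
        wander = 0.5 * math.sin(2 * math.pi * 0.2 * t)  # slow baseline drift
        noise = rng.gauss(0.0, 0.1)                     # additive Gaussian noise
        clean.append(pulse)
        noisy.append(pulse + wander + noise)
    return clean, noisy


# One 4-second window at 64 Hz.
clean, noisy = noisy_test_signal(n_samples=256, sample_rate_hz=64)
rms_corruption = math.sqrt(sum((n - c) ** 2 for c, n in zip(clean, noisy)) / len(clean))
print(f"RMS of added wander + noise: {rms_corruption:.3f}")
```

Feeding `noisy` through a codec and comparing the reconstruction against `clean` rather than against `noisy` is one way to check whether the codec is removing corruption or just reproducing it.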

Deployment Flexibility

compressionKIT supports two implementation paths: a hybrid DSP + ML approach for efficient on-device deployment, and an AI-first neural compression mode for maximum data reduction.

These compressed representations can be used in multiple ways:

- On-device inference directly on compressed data

- Compressed cloud upload for deeper analysis and model refinement

- Hybrid edge-cloud pipelines that balance latency, power, and compute

This flexibility is especially important for teams building longitudinal health and sensing applications.

Continuous sensor data is expensive to store and slow to transmit at scale. By compressing data at the source, compressionKIT enables long-duration monitoring—weeks or even months of continuous data—at a fraction of the storage and bandwidth cost.
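A back-of-the-envelope estimate shows how the numbers scale over long recordings. The sketch below uses the two-channel, 64 Hz PPG configuration from the footnote. The sample width of 2 bytes is an assumption, since the post does not state it, and the estimate ignores any packet or file-format overhead.

```python
def bytes_per_day(channels, sample_rate_hz, bytes_per_sample):
    """Raw data volume produced by a continuously sampled sensor in one day."""
    seconds_per_day = 24 * 60 * 60
    return channels * sample_rate_hz * bytes_per_sample * seconds_per_day


# Two-channel PPG at 64 Hz matches the footnoted test setup;
# 2 bytes per sample is an assumption made for illustration only.
raw_per_day = bytes_per_day(channels=2, sample_rate_hz=64, bytes_per_sample=2)

for days in (7, 30, 90):
    raw_mb = raw_per_day * days / 1e6
    compressed_mb = raw_mb / 16  # fixed 16x setting, ignoring container overhead
    print(f"{days:>2} days: raw {raw_mb:7.1f} MB, 16x compressed {compressed_mb:6.1f} MB")
```

Under these assumptions a single PPG sensor produces about 22 MB of raw data per day, so a month of recording drops from several hundred megabytes to a few tens of megabytes at 16×.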

On-Device Performance

compressionKIT includes a live dashboard that streams data directly from Ambiq hardware over USB, allowing teams to evaluate compressed and reconstructed signals in real time using their own devices—not just pre-recorded datasets.

Representative performance (demo):

- Encode latency: 4.1 ms

- Power: 31.7 mW during inference

- Memory footprint: ~21 KB

At this scale, compressionKIT can be integrated into existing sensor processing pipelines with minimal impact on system scheduling or resource budgets.
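To put the demo figures in energy terms, the sketch below multiplies power by latency to get energy per encode, then spreads that over a window period to get an average. It rests on two assumptions not stated in the post: that 31.7 mW is the average draw during the 4.1 ms encode, and that one encode runs per 4-second window (the window length used in the footnoted test setup). Sleep and sensor power are not included.

```python
def energy_per_encode_uj(power_mw, latency_ms):
    """Energy of one encode in microjoules (mW x ms = uJ)."""
    return power_mw * latency_ms


def average_power_uw(energy_uj, window_s):
    """Average power in microwatts when one encode runs per window (uJ / s = uW)."""
    return energy_uj / window_s


energy = energy_per_encode_uj(power_mw=31.7, latency_ms=4.1)
# One encode per 4-second window is an assumption borrowed from the footnote.
avg = average_power_uw(energy, window_s=4.0)
duty_cycle_percent = 100.0 * (4.1 / 1000.0) / 4.0

print(f"energy per encode: {energy:.1f} uJ")
print(f"average encode power: {avg:.1f} uW")
print(f"encoder duty cycle: {duty_cycle_percent:.3f}%")
```

Under these assumptions each encode costs roughly 130 µJ and the encoder is active about 0.1% of the time, which is the kind of budget that fits alongside existing sensor processing.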

Signal Support and Availability

compressionKIT reflects a broader shift in edge AI: optimizing not just inference, but the entire data pipeline from sensor to insight.

By reducing the cost of continuous data—across memory, bandwidth, and power—it enables more practical always-on systems and makes long-duration sensing at scale achievable.

This applies across a wide range of use cases, including:

- Physiological signals (PPG, ECG)

- Motion sensing (accelerometers)

- Audio and other continuous data streams

- Emerging multimodal edge AI systems

The current release focuses on PPG, ECG, and accelerometer data—the most common signals in wearable devices.

compressionKIT is currently in beta, and Ambiq is working with early partners to evaluate performance using real-world sensor data and product constraints.

By addressing the cost of data—not just inference—compressionKIT helps move edge AI from isolated use cases to scalable, always-on intelligence.

To learn more about compressionKIT, read our press release or visit our website.

¹ Baseline assumes uncompressed transmission of raw data. Results were measured on the Apollo510 using two-channel PPG sampled at 64 Hz in 4-second windows. Reported compression combines a fixed 16× reduction from the compressionKIT model with a dynamic entropy encoder, achieving average compression ratios of up to 20× over one minute of data. The 20× figure is not deterministic, because the entropy encoder's output size depends on the data.