heliaAOT – Ahead-of-Time Compiler for Ultra-Low Power Edge AI

Blazing-Fast Neural Inferencing

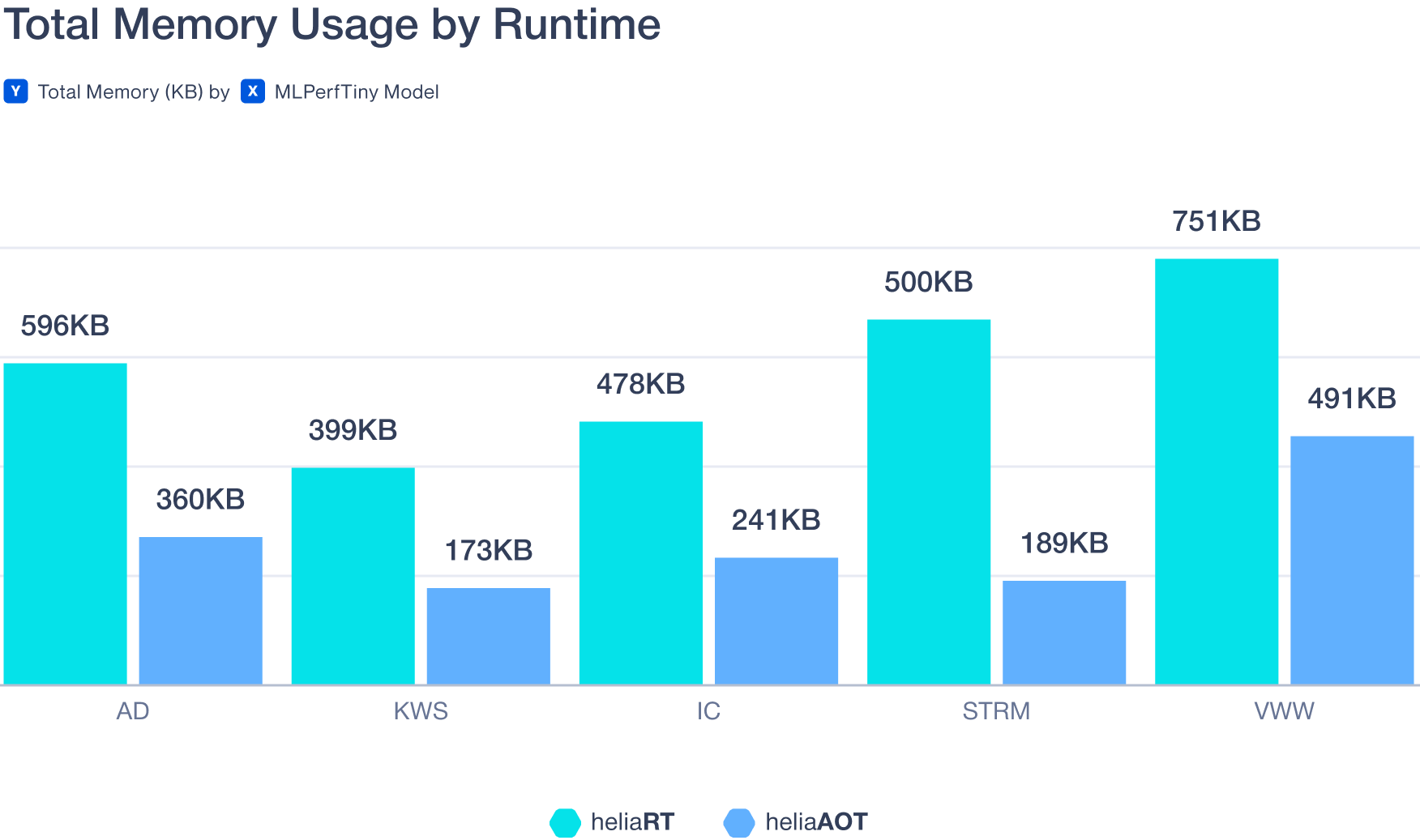

heliaAOT™ is an ahead-of-time (AOT) AI compiler that converts LiteRT (formerly TensorFlow Lite) models into highly optimized, standalone C inference modules tailored for Ambiq’s ultra-low-power SoCs. Because the model is compiled before deployment rather than interpreted on the device, heliaAOT reduces memory usage, minimizes runtime overhead, and speeds up inference for embedded and edge AI applications such as wearables, healthcare devices, smart home products, and industrial IoT systems.
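To make "standalone C inference module" more concrete, here is a hand-written sketch of the general shape such ahead-of-time output tends to take. It is not heliaAOT's actual generated code or API: the names `model_run`, `MODEL_IN_LEN`, `W1`, and the tiny two-layer integer network are hypothetical, and the sketch omits the requantization and scaling a real int8 model would need. It only illustrates the idea that weights, buffer sizes, and the layer sequence are all fixed at compile time.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical illustration only, NOT heliaAOT output.
 * All names and values below are invented for this sketch. */

#define MODEL_IN_LEN  4
#define MODEL_HID_LEN 3
#define MODEL_OUT_LEN 2

/* Weights and biases are baked in as const data, so the linker can
 * place them in non-volatile memory instead of loading a model file. */
static const int8_t W1[MODEL_HID_LEN][MODEL_IN_LEN] = {
    { 1, -1, 2, 0 },
    { 0,  1, 1, 1 },
    { -2, 0, 1, 3 },
};
static const int32_t B1[MODEL_HID_LEN] = { 0, -1, 2 };

static const int8_t W2[MODEL_OUT_LEN][MODEL_HID_LEN] = {
    { 1,  1, 0 },
    { 0, -1, 1 },
};
static const int32_t B2[MODEL_OUT_LEN] = { 1, 0 };

/* Scratch buffer sized at compile time: no heap and no runtime
 * memory planning. (Being static, it also makes model_run non-reentrant.) */
static int32_t hidden[MODEL_HID_LEN];

/* The layer sequence is emitted as straight-line code, so there is no
 * interpreter loop that looks up and dispatches operators at run time. */
void model_run(const int8_t in[MODEL_IN_LEN], int32_t out[MODEL_OUT_LEN]) {
    assert(in != NULL && out != NULL);

    /* Layer 1: fully connected + ReLU. */
    for (int j = 0; j < MODEL_HID_LEN; ++j) {
        int32_t acc = B1[j];
        for (int i = 0; i < MODEL_IN_LEN; ++i) {
            acc += (int32_t)W1[j][i] * (int32_t)in[i];
        }
        hidden[j] = acc > 0 ? acc : 0;
    }

    /* Layer 2: fully connected, raw integer logits. */
    for (int k = 0; k < MODEL_OUT_LEN; ++k) {
        int32_t acc = B2[k];
        for (int j = 0; j < MODEL_HID_LEN; ++j) {
            acc += (int32_t)W2[k][j] * hidden[j];
        }
        out[k] = acc;
    }
}
```

With the input `{1, 2, 3, 4}`, this sketch produces `{14, 7}`. An interpreter-based runtime, such as TensorFlow Lite for Microcontrollers, instead parses the model at run time and dispatches each operator through a registry. Removing that layer is where an AOT approach generally saves code size, RAM, and per-inference overhead.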